Squashfs Performance Testing

Experiments

Last week I discovered The Fan Club’s Experiments page. It reminds me of the Debian Experiments community on Google+. I like the concept of trying out all kinds of different things and reporting back to the community on how it works. I’ve been meaning to do more of that myself so this is me jumping in and reporting back on something I’ve been poking at this weekend.

Introducing Squashfs

Squashfs is a read-only compressed filesystem commonly used on embedded devices, Linux installation media and remote file systems (as is done in LTSP). Typically, a writable layer such as tmpfs, unionfs or aufs is mounted over this read-only filesystem to make it usable as a root filesystem. It has plenty of other use cases too, but for the purposes of this entry we’ll stick with these. It supports gzip, lzo and xz (lzma) as compression back-ends, and block sizes from 4K up to 1M.

Compression technique as well as block size can have major effects on both performance and file size. In most cases the defaults will probably be sufficient, but if you want to find a good balance between performance and space saving, then you’ll need some more insight.

My Experiment: Test effects of squashfs compression methods and block sizes

I’m not the first person to have done some tests on squashfs performance and reported on it. Bernhard Wiedemann and the Squashfs LZMA project have posted some results before, and while they are very useful, I want more information (especially compress/uncompress times). I was surprised not to find a more complete table elsewhere either. Even if such a table existed, I probably wouldn’t be satisfied with it. Each squashfs is different, and it makes a big difference whether it contains already compressed information or largely uncompressed information like clear text. I’d prefer to gather compression ratios and times for a specific image rather than rely on one that was used once-off for testing purposes.

So, I put together a quick script that takes a squashfs image, extracts it to tmpfs, and then re-compresses it using all the specified compression techniques and block sizes… and then uncompresses those same images to measure their read speeds.
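The loop at the heart of such a script can be sketched roughly as follows. This is a minimal illustration and not the actual test-mksquashfs.sh: the function name `bench_matrix`, the `DRY_RUN` switch and the `results/` file naming are assumptions made for this example, while `-comp`, `-b` and `-noappend` are standard mksquashfs options.

```shell
#!/usr/bin/env bash
# Sketch only: recompress an extracted tree with every compressor and
# block-size pair. bench_matrix, DRY_RUN and results/ are illustrative names.

COMPRESSORS="gzip lzo xz"
BLOCK_SIZES="4096 8192 16384 32768 65536 131072 262144 524288 1048576"

# bench_matrix SOURCE_DIR
# With DRY_RUN=1 the mksquashfs commands are printed instead of executed.
bench_matrix() {
    local src="$1" comp bs out
    for comp in $COMPRESSORS; do
        for bs in $BLOCK_SIZES; do
            out="results/squashfs-${comp}-${bs}.squashfs"
            if [ "${DRY_RUN:-0}" = "1" ]; then
                echo "mksquashfs $src $out -comp $comp -b $bs -noappend"
            else
                mkdir -p results
                # 'time' reports how long this combination took to compress
                time mksquashfs "$src" "$out" -comp "$comp" -b "$bs" -noappend
            fi
        done
    done
}
```

Running `DRY_RUN=1 bench_matrix /path/to/extracted-tree` first is a cheap way to check the list of commands before committing to a long run.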

My Testing Environment

For this post, I will try out my script on the Ubuntu Desktop 14.04.2 LTS squashfs image. It’s a complex image that contains a large mix of different kinds of files. I’m extracting it to RAM since I want to avoid having disk performance as a significant factor. I’m compressing the data back to SSD and extracting from there for read speed tests. The SSD seems fast enough not to have any significant effect on the tests. If you have slow storage, the results of the larger images (with smaller block sizes) may be skewed unfavourably.

As Bernhard mentioned in his post, testing the speed of your memory can also be useful, especially when testing on different kinds of systems and comparing the results:

    # dd if=/dev/zero of=/dev/null bs=1M count=100000
    104857600000 bytes (105 GB) copied, 4.90059 s, 21.4 GB/s

CPU is likely to be your biggest bottleneck by far when compressing. mksquashfs is SMP aware and will use all available cores by default. I’m testing this on a dual core Core i7 laptop with hyper-threading (so mksquashfs will use 4 threads) and with 16GB RAM apparently transferring around 21GB/s. The results of the squashfs testing script will differ greatly based on the CPU cores, core speed, memory speed and storage speed of the computer you’re running it on, so it shouldn’t come as a surprise if you get different results than I did. If you don’t have any significant bottleneck (like slow disks, slow CPU, running out of RAM, etc.) then your results should more or less correspond in scale to mine for the same image.
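To get a feel for how much of the compression time comes down to core count, one option is to cap the number of threads with the mksquashfs `-processors` option and compare runs. A small sketch: `mksquashfs_cmd` is a hypothetical helper that only builds the command line so it can be inspected before running, and `nproc` (from GNU coreutils) is assumed to be available when no thread count is given.

```shell
# mksquashfs_cmd SOURCE DEST [THREADS]
# Prints a mksquashfs command limited to THREADS processors.
# THREADS defaults to the core count reported by nproc.
mksquashfs_cmd() {
    src="$1"
    dest="$2"
    threads="${3:-$(nproc)}"
    echo "mksquashfs $src $dest -noappend -processors $threads"
}

# Example (paths are placeholders):
#   mksquashfs_cmd /mnt/ram/rootfs /tmp/one-thread.squashfs 1
#   mksquashfs_cmd /mnt/ram/rootfs /tmp/all-threads.squashfs
```

Timing the two printed commands on the same source tree should show how well the compressor scales on a given machine.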

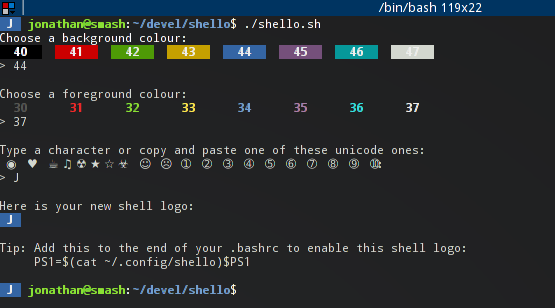

How to Run It

Create a directory and place the filesystem you’d like to test as filesystem.squashfs, then:

    $ apt-get install squashfs-tools
    $ wget https://raw.githubusercontent.com/highvoltage/squashfs-experiments/master/test-mksquashfs.sh
    $ bash test-mksquashfs.sh

With the default values in that file, you’ll end up with 18 squashfs images taking up about 18GB of disk space. I keep all the results for inspection, but I’ll probably adapt/fix the script to be more friendly to disk space usage some time.

You should see output that looks something like this, with all the resulting data in the ./results directory.

    * Setting up...
    - Testing gzip
    * Running a squashfs using compression gzip, blocksize 4096
    * Running a squashfs using compression gzip, blocksize 8192
    * Running a squashfs using compression gzip, blocksize 16384
    ...
    - Testing lzo
    * Running a squashfs using compression lzo, blocksize 4096
    * Running a squashfs using compression lzo, blocksize 8192
    * Running a squashfs using compression lzo, blocksize 16384
    ...
    - Testing xz
    * Running a squashfs using compression xz, blocksize 4096
    * Running a squashfs using compression xz, blocksize 8192
    * Running a squashfs using compression xz, blocksize 16384
    ...
    * Testing uncompressing times...
    * Reading results/squashfs-gzip-131072.squashfs...
    * Reading results/squashfs-gzip-16384.squashfs...
    * Reading results/squashfs-gzip-32768.squashfs...
    ...
    * Cleaning up...

On to the Results

The report script will output the results into CSV.

Here’s the table with my results. Ratio is the image size as a percentage of the original uncompressed data; CTIME and UTIME are the compression and uncompression times for the entire image.

| Filename | Size | Ratio | CTIME | UTIME |
| --- | --- | --- | --- | --- |
| squashfs-gzip-4096.squashfs | 1137016 | 39.66% | 0m46.167s | 0m37.220s |
| squashfs-gzip-8192.squashfs | 1079596 | 37.67% | 0m53.155s | 0m35.508s |
| squashfs-gzip-16384.squashfs | 1039076 | 36.27% | 1m9.558s | 0m26.988s |
| squashfs-gzip-32768.squashfs | 1008268 | 35.20% | 1m30.056s | 0m30.599s |
| squashfs-gzip-65536.squashfs | 987024 | 34.46% | 1m51.281s | 0m35.223s |
| squashfs-gzip-131072.squashfs | 975708 | 34.07% | 1m59.663s | 0m22.878s |
| squashfs-gzip-262144.squashfs | 970280 | 33.88% | 2m13.246s | 0m23.321s |
| squashfs-gzip-524288.squashfs | 967704 | 33.79% | 2m11.515s | 0m24.865s |
| squashfs-gzip-1048576.squashfs | 966580 | 33.75% | 2m14.558s | 0m28.029s |
| squashfs-lzo-4096.squashfs | 1286776 | 44.88% | 1m36.025s | 0m22.179s |
| squashfs-lzo-8192.squashfs | 1221920 | 42.64% | 1m49.862s | 0m21.690s |
| squashfs-lzo-16384.squashfs | 1170636 | 40.86% | 2m5.008s | 0m20.831s |
| squashfs-lzo-32768.squashfs | 1127432 | 39.36% | 2m23.616s | 0m20.597s |
| squashfs-lzo-65536.squashfs | 1092788 | 38.15% | 2m48.817s | 0m21.164s |
| squashfs-lzo-131072.squashfs | 1072208 | 37.43% | 3m4.990s | 0m20.563s |
| squashfs-lzo-262144.squashfs | 1062544 | 37.10% | 3m26.816s | 0m15.708s |
| squashfs-lzo-524288.squashfs | 1057780 | 36.93% | 3m32.189s | 0m16.166s |
| squashfs-lzo-1048576.squashfs | 1055532 | 36.85% | 3m42.566s | 0m17.507s |
| squashfs-xz-4096.squashfs | 1094880 | 38.19% | 5m28.104s | 2m21.373s |
| squashfs-xz-8192.squashfs | 1002876 | 34.99% | 5m15.148s | 2m1.780s |
| squashfs-xz-16384.squashfs | 937748 | 32.73% | 5m11.683s | 1m47.878s |
| squashfs-xz-32768.squashfs | 888908 | 31.03% | 5m17.207s | 1m43.399s |
| squashfs-xz-65536.squashfs | 852048 | 29.75% | 5m27.819s | 1m38.211s |
| squashfs-xz-131072.squashfs | 823216 | 28.74% | 5m42.993s | 1m29.708s |
| squashfs-xz-262144.squashfs | 799336 | 27.91% | 6m30.575s | 1m16.502s |
| squashfs-xz-524288.squashfs | 778140 | 27.17% | 6m58.455s | 1m20.234s |
| squashfs-xz-1048576.squashfs | 759244 | 26.51% | 7m19.205s | 1m28.721s |
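For comparing rows, it can help to turn the `XmY.YYYs` times into plain seconds and to express one size as a percentage of another. The two helpers below are a minimal sketch of that arithmetic using awk; the names `to_seconds` and `percent_of` are made up for this example and are not part of the test script.

```shell
# to_seconds TIME
# Converts a bash-style "1m9.558s" duration into seconds.
to_seconds() {
    awk -v t="$1" 'BEGIN {
        split(t, part, "m")        # part[1] = minutes, part[2] = "9.558s"
        sub(/s$/, "", part[2])     # drop the trailing "s"
        printf "%.3f\n", part[1] * 60 + part[2]
    }'
}

# percent_of SIZE REFERENCE
# Prints SIZE as a percentage of REFERENCE, to two decimals.
percent_of() {
    awk -v s="$1" -v r="$2" 'BEGIN { printf "%.2f\n", 100 * s / r }'
}

# Examples using rows from the table:
#   to_seconds 1m9.558s          # gzip/16384 CTIME      -> 69.558
#   percent_of 1039076 975708    # gzip/16384 vs 131072  -> 106.49
```

The same `percent_of` call reproduces the Ratio column when the reference is the size of the uncompressed tree, measured in the same units as the Size column.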

Some notes:

- Even though images with larger block sizes uncompress faster as a whole, they may introduce more latency on live media, since a whole block needs to be uncompressed even if you’re reading just 1 byte from a file.

- Ubuntu uses gzip with a block size of 131072 bytes on its official images. If you’re doing a custom spin, you can get improved performance on live media by using a 16384 block size, at a cost of about 2 percentage points of compression ratio (36.27% versus 34.07% in the table above), which works out to an image roughly 6% larger.

- I haven’t experimented with -Xdict-size (dictionary size) for xz compression yet; it might be worthwhile sacrificing some memory for better performance / compression ratio.

- I also want stats for random byte reads on a squashfs, and typical per-block decompression for compressed and uncompressed files. That will give better insights on what might work best on embedded devices, live environments and netboot images (the above table is more useful for large complete reads, which is useful for installer images but not much else), but that will have to wait for another day.

- In the meantime, please experiment on your own images and feel free to submit patches.
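As a starting point for the random-read measurements mentioned in the notes, a single-byte read at a chosen offset can be done with `dd`. This is only a sketch of the idea, not something the test script does: `read_byte_at` is a hypothetical helper, and the file path in the example is a placeholder for a file on a mounted squashfs.

```shell
# read_byte_at FILE OFFSET
# Reads exactly one byte at byte offset OFFSET and writes it to stdout.
# On a mounted squashfs, the whole block containing that byte has to be
# decompressed to satisfy the read.
read_byte_at() {
    dd if="$1" bs=1 skip="$2" count=1 2>/dev/null
}

# Example (placeholder path):
#   time read_byte_at /mnt/squash/path/to/large-file 123456 > /dev/null
```

Repeated reads of the same block will be served from the page cache, so for meaningful timings the cache would need to be dropped between runs (for example with `echo 3 > /proc/sys/vm/drop_caches` as root) or a fresh offset used each time.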

I am trying to speed the creation process for filesystem.squashfs and I would like to run mksquashfs using just RAM as the source and destination. Have you done this?

Thanks!

Interesting read.

I have some recent nerding on github with a less traditional usage of squashfs that you might find of value.

https://github.com/devendor/squashlock

I’m sure I reinvented the wheel, but it’s simple and illustrative of some core Linux features that are overshadowed by derived application usage.